10+ years of building, inventing, and shipping 0-to-1 products in AI, AR, and smart glasses at Easel, Snapchat, and Stanford.

I'm currently Co-founder, CEO, and Chief Product Officer at Easel AI, Inc. (think a photo-based Sora app, but launched two years ago). Previously, I was a senior research scientist and a founding member of Snapchat's HCI Group, a product incubator-like org. At Snap, I worked with Bobby Murphy (Snap co-founder) and shipped a range of products using AI and AR for communication, creativity, education, games, and smart glasses, engaging millions of users.

Before Snap, I was a postdoctoral fellow at Stanford University, where I developed crowdsourcing products for scaling open-ended complex goals, such as research, teaching, time-critical campaigns, and frictionless data collection for AI. I spent the majority of my grad school time at the Stanford Human-Computer Interaction (HCI) Group and worked with various groups at LANL, Microsoft Research, PARC, MobiSocial, Inc., and IBM Research. I've also had work experience at Google SoC, OpenStreetMap, OLPC, and Accenture Tech Labs. With a Ph.D. in CS focused on HCI, I thrive in ambiguity and enjoy solving hard, user-centered problems through product design, user research, and AI.

Some key stats include 50+ patents on novel product concepts, 50+ HCI publications (3x awards), 50+ mentees, 2,500+ people orchestrated, $2.65M+ raised, 20+ press mentions, 50+ talks/panels, 10+ as PC, numerous products launched, and several teams led. My work has been featured at popular venues such as TechCrunch (2x), The Verge, MIT Technology Review, Stanford News, Harvard Business Review, New Scientist, WIRED, and a Times Square billboard in NYC.

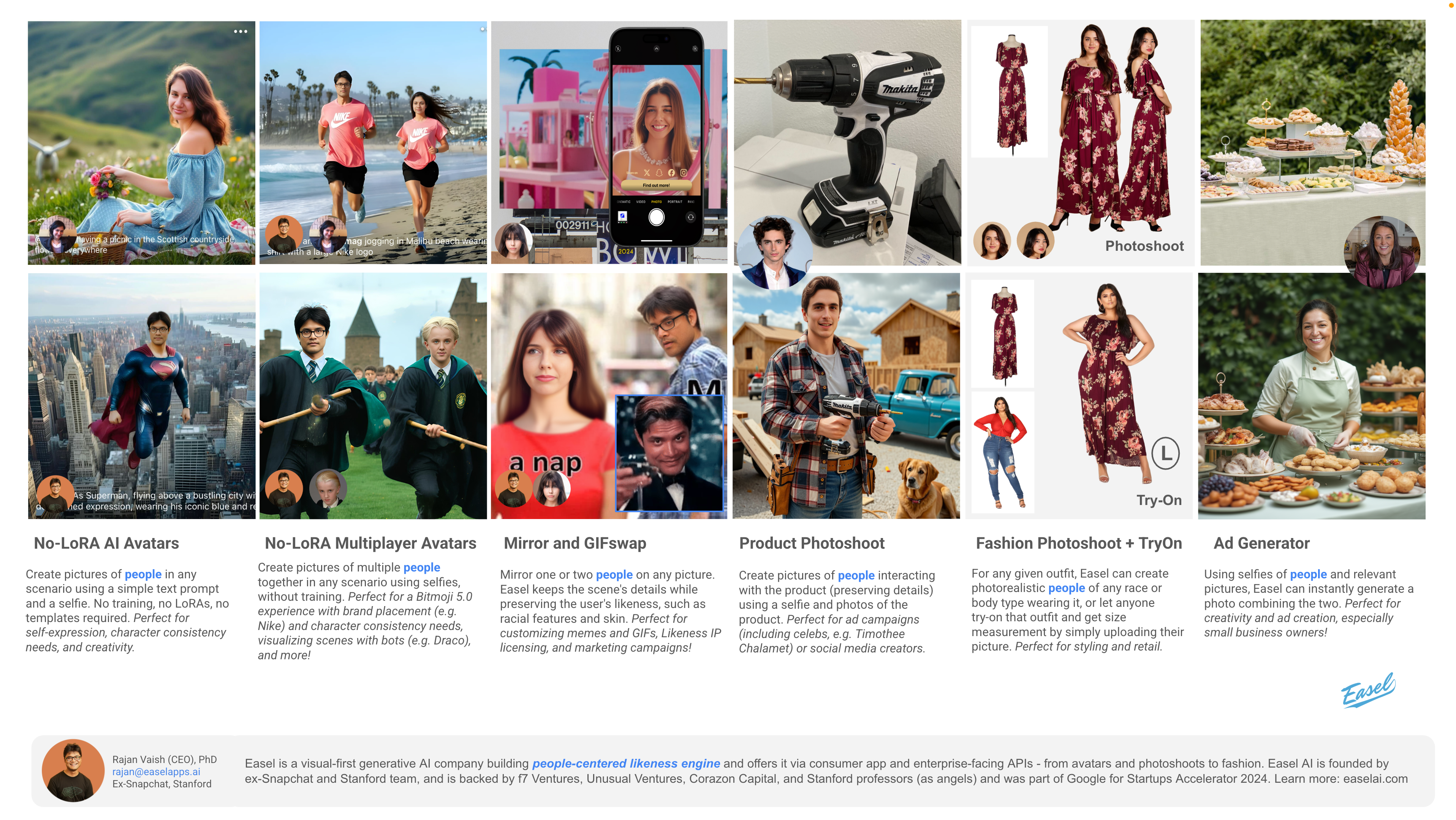

Easel: Launched a "photo-based Sora app" two years ago

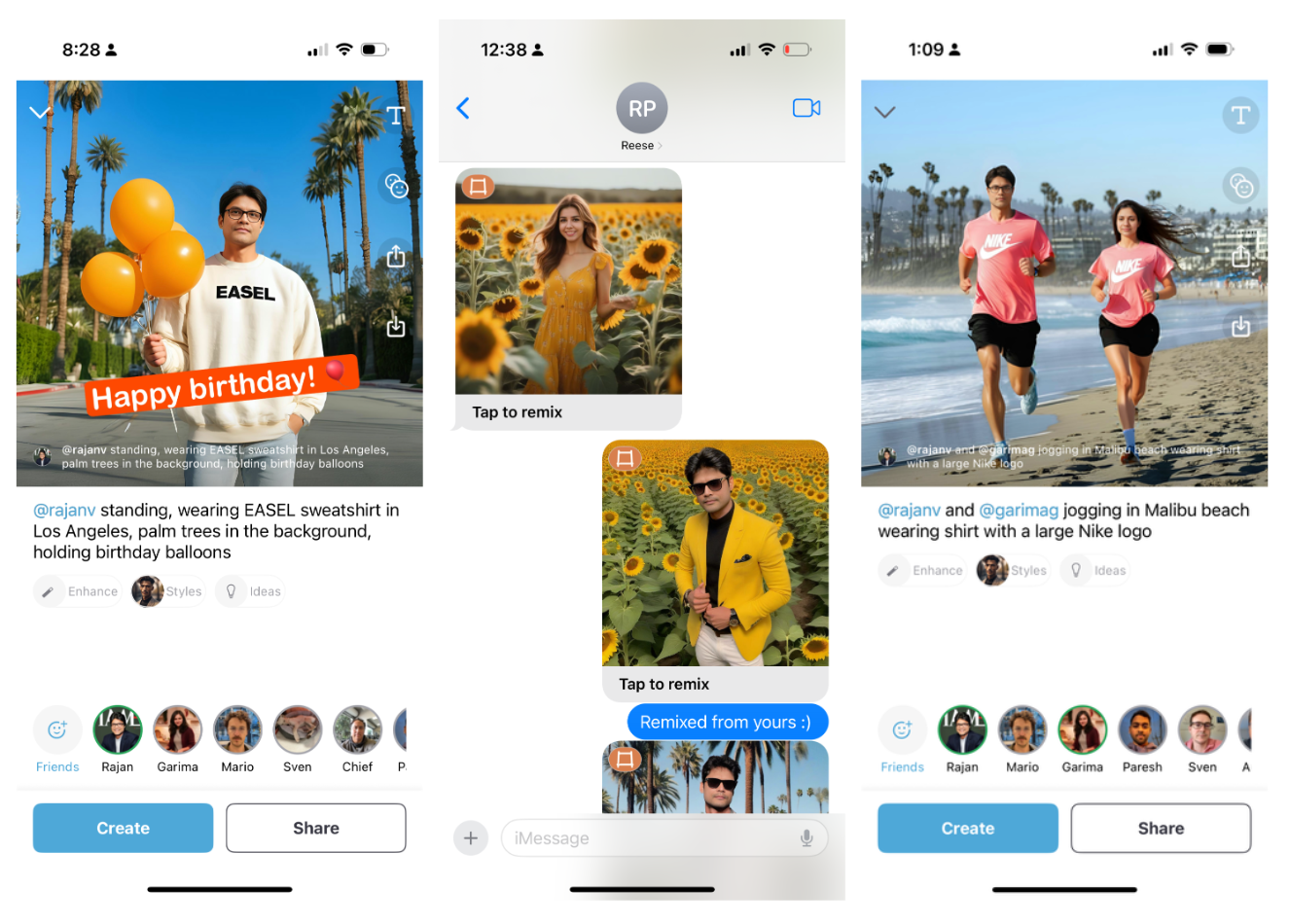

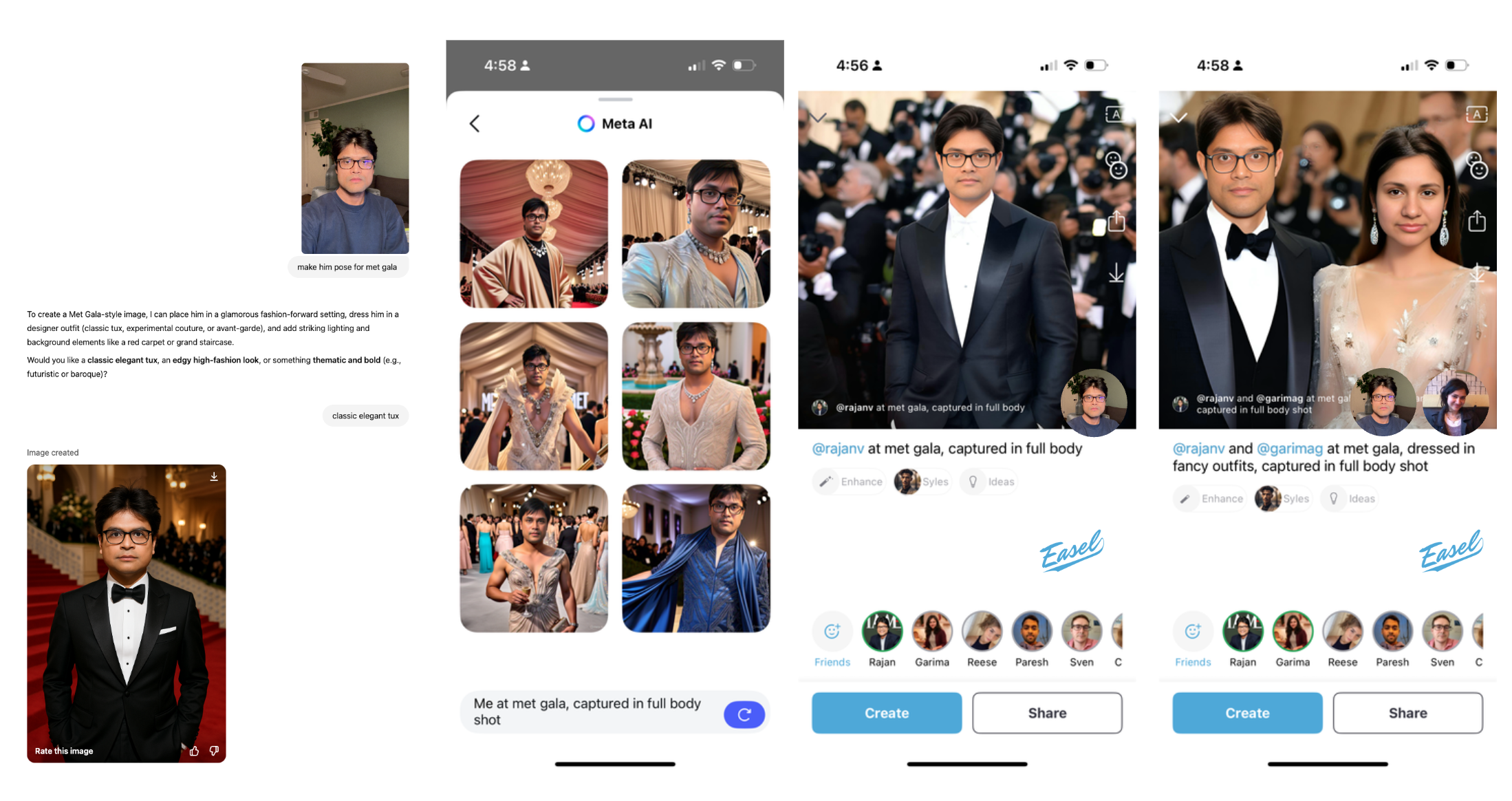

In 2023, we launched Easel, one of the first social AI products: an AI avatar-based social chat app where users can create and remix multiplayer pictures with friends and express themselves in fun and imaginative ways, directly from iMessage. TechCrunch featured us and called it an "AI-first, next-generation Bitmoji" app. Soon after, Apple announced that it would debut a similar iMessage feature, and TechCrunch featured us again, writing: "It’s reminiscent of something a group of ex-Snap researchers were working on with their startup Easel, which was something of a next-generation Bitmoji", which validated our product approach. Later, several large consumer companies incorporated Easel-like experiences and made them mainstream. Given user feedback and insights, we doubled down on the main app experience, focusing on friends and fashion, akin to today’s OpenAI Sora app, which closely mirrors Easel’s product design concepts introduced two years ago, from the UI elements to the UX flow.

While Easel-like experiences are common now, when we were first building Easel in 2023 (which feels like ages ago on the AI timeline), there were no out-of-the-box solutions, so we had to invent several product concepts (to design the app) and AI approaches (to power the app) together. As a result, I am running a B2C product company, an AI lab, and a B2B API enterprise at the same time.

We raised $2.65M in visual consumer AI from Unusual Ventures, f7 Ventures (now Perplexity Fund), and Corazon Capital, whose Easel partners have co-founded and run iconic companies such as Nextdoor and OkCupid/Match Group and held senior leadership positions at companies like Meta and Tinder. A few Stanford professors also supported Easel's vision as angels. In 2024, Easel was accepted into the inaugural cohort of Google for Startups Accelerator: AI First North America. Easel is also a member of NVIDIA's Inception Program, Digital Ocean's Hatch Program, Cloudflare's, Microsoft Azure's, Nebius', AWS' Startup Programs, and an official partner with fal.ai and Replicate (now Cloudflare).

Easel on iMessage sizzle

Multiplayer launched

Easel on iMessage walkthrough

New Easel for fashion and friends

Easel talk at Google for Startups

Easel App on iOS App Store (B2C)

Easel was launched as an AI avatar-based social chat app that runs on iMessage. Think "AI-first Snapchat on iMessage", one that bypasses the social graph constraints by building on America’s largest “social network” for teens - iMessage. However, after Apple removed the iMessage Apps tray, we built upon user feedback and insights, and doubled down on the main app experience, focusing on friends and fashion for fun and function.

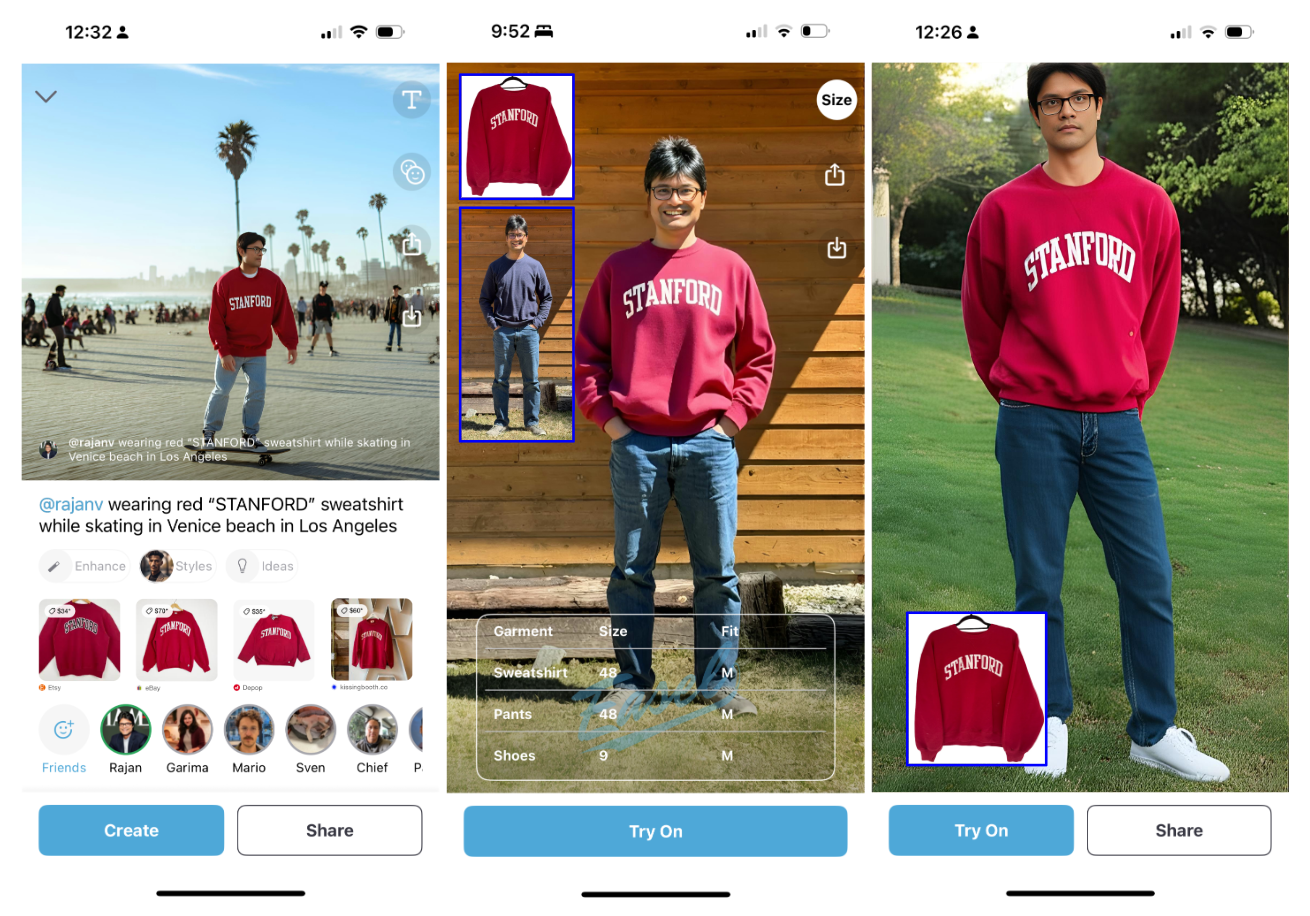

Easel app enables two key product experiences — Friends and Fashion — for each, we invented novel product concepts/formats (like Snapchat invented Stories), designed intricate UI/UX flows, and conducted UX research with mixed-methods approaches.

- Friends product: Using just a single selfie, a user can onboard to the app and create pictures (Easels) of themselves using simple text prompts. If they and their friends follow each other on the app, they can create multiplayer pictures together. We created novel user control and consent mechanisms, so that users have full agency to revoke access and full visibility into pictures created with them.

Users can send and remix Easels on iMessage or within the app's public feed. The public feed not only allows users to share their Easels but also enables newcomers to get inspired and get started with the app. We also launched "Easel of the day", where users wake up to contextually relevant pictures pre-generated for them. This not only inspired and educated them about Easel's use cases, but also increased their native sharing behavior.

Easel users generally create pictures to greet, express themselves, imagine scenes with friends or romantic partners, and most importantly, they create fashion pictures of themselves in unique outfits. At its peak, Easel saw a D30 retention (3mo usage) of 45% with over 90% clearing the onboarding funnel, and on average, each user has created about a hundred pictures to date.

- Fashion product: Think "Shazam for fashion try-on", where taking a camera-first approach, users can capture pictures of outfits while shopping online, or at a mall, of a friend, or a celebrity on TV, then see the final look on their own (body) picture. For each outfit, Easel generates two key outputs at once (to minimize decision-making on the user's end and reduce cognitive load): 1. Try-On on their own photo for function and utility; 2. Photoshoot avatar in an aesthetic scene for fun and social sharing. Easel's Try-On works on outfits worn by anyone or laid flat on the ground, isolated or combined with other garments. Try-On is also complemented by Size Estimator.

The app itself has a curated collection of "Outfit ideas" and users can remix outfits shared on the feed, creating a cascading effect. Enabling users to imagine outfits with friends or try on real outfits unlocks fun, function, and commerce-related opportunities.

Talks, Podcasts, Press, and Partnerships

- [Podcast] Snap Mafia: Rajan Vaish, founder of Easel, on building at the intersection of social and Gen AI and what he learned doing research at Stanford and Snap, ADSN By James Borow and Daniel Druger 05/24

- [Press] With Easel, ex-Snap researchers are building the next-generation Bitmoji thanks to AI, TechCrunch 04/24

- [Press] Apple debuts AI-generated … Bitmoji, TechCrunch 06/24

- [Feature] Easel featured in Google for Startups Accelerator official announcement, 04/24

- [Feature] Easel featured in a16z market map of AI x Social 02/24

- [Feature] Easel featured at Times Square billboard in NYC!

- [Startup Talks] Silicon Valley Bank (Easel was selected as top 10 LA startups for the SVB event 2024)

- [Startup Talks] Presented Easel at Google for Startups Accelerator AI First Demo Day at Google HQ 06/24

- [Panel] Planting the (Consumer Tech) Seed Panel hosted by f7 Ventures at a16z New York Tech Week 2024

- [Panel] "The Art and Science of Content: Balancing AI efficiency with Human Creativity" Panel hosted by Lemonlight in Los Angeles, CA 2024

- [Panel] The Future is Personal: Building for the Individual at Scale Panel hosted by Unusual Ventures and Stanford Founders at Stanford University, 2025

- [Partnerships] Amazon partnered with Easel twice for interactive AI experiences, once in New York during the Tech Week and another time in Silicon Valley during TechEx conference.

- [Academic Talks] Columbia University, University of Michigan, University of British Columbia, University of North Carolina Chapel Hill, University of California Irvine, University of Massachusetts Amherst

- [Academic Curriculum] Easel as a case study was part of a marketing class at Darla Moore School of Business at the University of South Carolina

Easel's suite of AI products

Easel AI Avatars (Likeness Engine)

Easel quality vs. Meta AI and 4o

Easel Fashion Try-On and Avatars

Easel AI Lab and APIs (B2B)

Developing a best-in-class AI avatar is existential to the Easel app. In the process of building a high-quality avatar for the app, we made several technical breakthroughs and launched a range of AI products. Later, we partnered with fal.ai and Replicate, two of the world's largest AI model inference platforms, and shipped six "people-centered" visual AI models as APIs, for consumer (B2C) and commerce (B2B) use cases. We observed half a million API calls by the end of 2025. To date, we've built:

- LoRAs-based AI avatars: Back in 2023, when every app required users to upload a variety of pictures from their camera roll, Easel required just a series of selfies for a frictionless onboarding experience, balancing product UX with technical limitations. We developed a proprietary approach that generated avatars with a high degree of likeness and prompt adherence, without the overfitting issues that were expected with just selfies as the training data. This approach has since been replaced by No-LoRA avatars.

- No-LoRA AI avatars (Likeness Engine): Easel became one of the first companies to generate single and multiplayer pictures using just an individual's selfie. We developed a proprietary approach that requires no training time, no LoRAs, and no complex infrastructure, and is agnostic to any model to facilitate a plug-and-play approach. Its facial resemblance quality exceeds that of the largest companies in this space. A high-quality likeness engine that can visualize specific people in any setting, with anyone as avatars, unlocked a range of use cases in consumer and commerce, from fashion to photoshoots and ad generators.

- Fashion Try-On, Avatars, Photoshoot, and Size Estimator: Leveraging our "likeness engine" and fine-tuning models, we built a range of fashion-related AI products. Easel preserves outfit details, faces, and body shape. We directly engaged with fashion brands like Hugo Boss in Germany, and the API is already being used by existing fashion platforms.

- Product Photoshoot and Ad Generator: After getting pull for commerce use cases, such as visualizing people next to products for ads and marketing content, we built Product Photoshoot and Ad Generator. We developed a proprietary approach called "Magic blend" to preserve the textual details of the product.

B2B Partnerships

- fal.ai partnered with Easel to launch six models 2025

- Replicate partnered with Easel to launch AI avatars and Mirror models 2025

Snap: Led social- and creativity-focused AR and AI products and games for Snapchat and Spectacles

At Snap Research, I incubated and shipped several AR social products on the phone and smart glasses. I invented new product concepts and my Snap work alone has resulted in 15+ top-tier publications, 50+ patents, and the public release of official Snapchat lenses that have led to the engagement of millions of users. To date, I have mentored 30+ research interns. As a founding member of the Human-Computer Interaction (HCI) group, a product and startup incubator-like org, I interacted closely with the Snap co-founder and CTO, Bobby Murphy, and regularly presented my work to C-level and VP-level executives.

At Snap, I collaborated with over 10 product teams across the company and led and managed the product development life cycle of many products — from ideation to design, development, deployment, UX research, A/B Testing, and Mixed-Methods evaluation — orchestrating teams of research engineers, scientists, designers, interns, and product team partners. In particular, I have worked on the following initiatives that led to several products:

Wearable communication and AR products for friends

New form factors unlock new forms of communication. It is the form factor of smartphones that enabled camera-first apps like Snapchat to exist and thrive. Therefore, it is inevitable that when smartglasses are widely adopted, they will give rise to the new Snapchats of the smartglasses world. But what would the future of AR communication look like? To answer this question, we built products that leverage the hands-free form factor of smartglasses, where a camera can see anytime, a screen can project anytime, and a computer can sense anytime. I primarily led and launched the following products.

- Friendscope on Spectacles enables a near-live streaming experience on hardware-constrained, lightweight commercial camera glasses. A key component of the product was approved by Evan Spiegel and transitioned to the Spectacles product team. Learn more.

- ARcall on Spectacles enables a live, synchronous AR-based calling system on smartglasses and smartphones. Learn more.

- ARmessenger enables an asynchronous AR-based messaging system that relies on location, time, and visual triggers to create contextual and semantically relevant experiences. Learn more.

- Social Wormholes on Spectacles enables a world of scalable ubiquitous computing where people can interconnect any, and any number of objects and physical spaces around them to stay in touch. Learn more.

- Ephemeral AR messaging app on Snapchat enables communication beyond photos/videos, where users can send and receive AR clones of objects around them, as if they were transmitting those objects via Wormholes. This lens was officially launched by Snapchat; in over 185,000 sessions, people sent clones to their friends 85% of the time.

- Memento Player enables a system that allows one to step inside and interact with a volumetric AR moment — from freezing the time to speeding it up — with anyone around the world from multiple perspectives. The project rapidly gained interest from the leadership of various teams within Snap, ranging from deployments at festivals to amusement parks.

- Analyzing the camera glasses study aimed to understand how people use smart glasses in the real world.

Publications

- Nicolas, M; Smith, B.A; Vaish, R. “Friendscope: Exploring In-the-Moment Experience Sharing on Camera Glasses via a Shared Camera”, ACM CSCW 2022. (project page, video)

- Surale, H; Tham, YJ; Smith, B.A; Vaish, R. “ARcall: Exploring Augmented Reality-Based Real-Time Communication”, ACM AHs 2022, Munich, Germany. (video)

- Lee, K; Li, H; Wellyanto, R.M; Tham, YJ; Liu, F; Monroy-Hernandez, A; Smith, B.A; Vaish, R. “Exploring Immersive Interpersonal Communication via AR”, ACM CSCW 2022. (video)

- Bipat, T; Bos, M.W; Vaish, R; Monroy-Hernandez, A. “Analyzing the use of camera glasses in the wild”, ACM CHI 2019.

- Kratz, S; Monroy-Hernandez, A; Vaish, R. “What’s Cooking? Olfactory Sensing Using Off-the-Shelf Components”, ACM UIST 2022 Posters, Bend OR.

- Leong, J; Seow, O; Fang, C.M; Tang, B.J; Vaish, R; Maes, P. “Wemoji: Towards Designing Complementary Communication Systems in Augmented Reality”, ACM UIST 2022 Posters, Bend OR.

- Ritchie, J; Liu, Y; Kratz, S; Sra, M; Smith, B.A; Monroy-Hernandez, A; Vaish, R. “Memento Player: Shared Multi-Perspective Playback of Volumetrically-Captured Moments in Augmented Reality”, ACM CHI EA 2023. (video)

- Leong, J; Teng, Y; Liu, X; Jun, H; Kratz, S; Tham, YJ; Monroy-Hernandez, A; Smith, B.A; Vaish, R. “Social Wormholes: Exploring Preferences and Opportunities for Distributed and Physically-Grounded Social Connections”, ACM CSCW 2023, Minneapolis, MN, USA. (project page, video)

Playful co-located AR games for friends, kids and pets

It’s common to spend hours on your phone connecting with people who are somewhere else while disconnecting from those right beside you. But what if you used your phone to connect with those in your space? We reimagine the role of technology with the goal of bringing people in the same space together. A lot of tech aims to build better relationships by decreasing the quantity of screen time. Through the following products/games (listed below), our mission is to do it by improving the quality of screen time instead. Learn more.

- Project IRL explores novel ways of supporting in-person social interactions with a suite of 10 augmented reality games and experiences on Snapchat. The playtime of these lenses was up to 13x more than the baseline (of Snapchat lenses), and they recorded over 2 million impressions. Learn more.

- Jigsaw enables an authoring IDE for crafting highly immersive storytelling experiences with AR and IoT devices to leverage virtual and physical augmentations.

- Understanding the role of context study aimed to investigate factors that influence the enjoyment of co-located interactions.

Publications

- Zhang, L; Kim, D; Cho, Y; Robinson, A; Tham, YJ; Vaish, R; Monroy-Hernandez, A. “Jigsaw: Authoring Immersive Storytelling Experiences with Augmented Reality and Internet of Things”, ACM CHI 2024.

- Reig, S; Cruz, E.P.; Powers, M; He, J; Chong, T; Tham, YJ; Kratz, S; Robinson, A; Smith, B.A; Vaish, R; Monroy-Hernandez, A. “Supporting Piggybacked Co-Located Leisure Activities via Augmented Reality”, ACM CHI 2023. (project page)

- Dagan, E; Cardenas Gasca, A; Robinson, A; Noriega, A; Tham, YJ; Vaish, R; Monroy-Hernandez, A. “Project IRL: Playful Co-Located Interactions with Mobile Augmented Reality”, ACM CSCW 2022. (video, project page)

- Liu, C; Smith, B; Vaish, R; Monroy-Hernandez, A. “Understanding the Role of Context in Creating Enjoyable Co-Located Interactions”, ACM CSCW 2021.

AR products for digital handcrafting and collaboration

Digital communication is often brisk and automated. From auto-completed messages to "likes," research has shown that such lightweight interactions can affect perceptions of authenticity and closeness. On the other hand, handcrafted effort in relationships can forge emotional bonds by conveying a sense of caring and is essential in building and maintaining relationships. To explore digital handcraftedness, we leveraged the physicality associated with augmented reality and built a range of products (listed below).

- Auggie iOS app enables ways to create digitally handcrafted AR experiences for each other, centered around crafting a 3D character with photos, animated movements, drawings, and audio/music for someone else. Think creating a TikTok in AR. Check out our Medium post and the video.

- Timecapsule enables a collaborative crafting system for keepsaking where friends can “clone” any object in their space and put it in an AR box to reopen at a later time, as if they were creating a time capsule. This lens was officially launched by Snapchat.

- Blocks on Snapchat enable co-creation of LEGO-like structures between friends. Being one of the first examples of shared AR at Snap, Blocks contributed to the inaugural launch of shared AR experiences on Snapchat with the LEGO Group during Snap Partners Summit 2021. This product and research insights helped unlock the Connected-Lens (multiplayer lenses) initiative across the company. Later, Snap also launched the Block drawing lens as part of their official Connected Lenses collection.

Publications

- Zhang, L; Chen, T; Seow, O; Chong, T; Kratz, S; Tham, YJ; Monroy-Hernandez, A; Vaish, R; Liu, F. “Auggie: Encouraging Effortful Communication through Handcrafted Digital Experiences”, ACM CSCW 2022. (video) Best Paper Award (top 1% of submissions).

- Guo, A; Canberk, I; Murphy, H; Monroy-Hernandez, A; Vaish, R. “Blocks: Collaborative and Persistent Augmented Reality Experiences”, ACM UbiComp 2019, London, UK (video); (Press: The Verge, CNET).

HCI x Product Studies: Answering questions for the Product teams through user research

- Communication shifts during Covid-19 study aimed to understand how people’s usage of Snapchat changed during lockdown so that Snap can adapt to the rapidly changing user behavior. This study was conducted in collaboration with the Data and Computational Social Science team.

- Snapchat public sharing study aimed to understand the reasons and the role of context in sharing content publicly on Snapchat. The design implications around the feedback loop to encourage user generated content (UGC) were CEO approved for productization and the paper written on this research won a best paper honorable mention award at CHI'19.

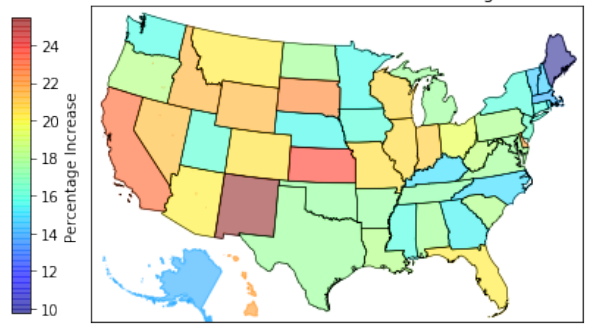

- Curation and moderation of video-based content is a significantly harder problem than analyzing photos or text. Using novel interfaces and combining AI with the crowd, our system was able to perform 3x faster than dedicated curators, and its output was of comparable quality. A version of the interface was later implemented and deployed in the internal content moderation tool, where the number of Snaps moderated per day increased by 22% with an accuracy of over 99%. I also helped create a tag-based interface for ad moderation, which was productized and shipped.

Publications

- Teng, Y; Courtien, C; Rios, D; Tseng, Y; Gibson, J; Aziz, M; Reyna, A; Vaish, R; Smith, B.A. “Help Supporters: Exploring the Design Space of Assistive Technologies to Support Face-to-Face Help Between Blind and Sighted Strangers”, ACM CHI 2024. (in collaboration with Columbia University's CEAL Lab)

- Yang, Q; Wang, W; Pierce, L; Vaish, R; Shi, X; Shah, N. “Online Communication Shifts in the Midst of the Covid-19 Pandemic: A Case Study on Snapchat”, AAAI ICWSM 2021.

- Chen, Y; Monroy-Hernandez, A; Wehrman, I; Oney, S; Lasecki, W; Vaish, R. “Sifter: A Hybrid Workflow for Theme-based Video Curation at Scale”, ACM IMX 2020, Barcelona, Spain (video).

- Habib, H; Shah, N; Vaish, R. “Impact of Contextual Factors on Snapchat Public Sharing”, ACM CHI 2019, Glasgow, Scotland. Best Paper Honorable Mention.

Snap Creative Challenge

Launched in 2020, the Snap Creative Challenge awarded $100K to academic institutions to explore topics of societal interest using AR. I helped co-found this program and led the 2022 initiative, from defining the topic to outreach and the selection process. To date, we have supported over 25 institutions from over 10 countries, producing publications at top-tier venues.

- The Future of Moments in AR (2022) explores how people could relive moments in the future in a way that feels like re-experiencing them immersively.

- The Future of Co-located AR (2021) explored new use cases for collaborative AR — utilitarian, games or social. Read our takeaways.

- The Future of Storytelling in AR (2020) x ACM IMX explored new opportunities and experiences for AR storytelling. Read our blog post.

Services and other product and academic collaborations at Snap Research

- Guest lectures and judging: UCLA, UC Berkeley, UC San Diego, Columbia University, University of Washington, Virginia Tech, ArtCenter College of Design

- Academic services while being at Snap: Served as CHI Courses Co-chair and Interactivity Jury, ACM FCA Recruiting Co-chair, CSCW AC, UIST AC, IUI PC, HCOMP PC, AAAI PC, WWW PC, ICTD PC, COMPASS PC. Served on the advisory board for Italian Federal grant and NSF grants of faculty members at UCLA and Michigan. Reviewed over 50 papers in AR, social computing and crowdsourcing.

- Fellowship, scholarship and research internship program: Oversaw the launch of the inaugural fellowship and scholarship programs, where I helped design marketing strategies, workshops, and rubric for evaluation.

- Design sprints, outreach, literature review, knowledge transfers and consulting product teams: Collaborated with 10+ product teams ranging from Product Design and Spectacles team to Content and Growth on 30+ topics ranging from creative tools and ecosystems to UI/UX best practices and AR/drone interfaces.

Stanford projects: Built products and communities

crowdresearchinitiative.stanford.edu

aspiringresearchers.soe.ucsc.edu

crowdresearch.stanford.edu

daemo.stanford.edu

wisdomofcrowds.stanford.edu

Stanford Crowd Research: Built the world’s first open online research lab, think Coursera, but for research (MOOR)

Collaborators: Michael Bernstein, Sharad Goel, Geza Kovacs, Ranjay Krishna, Camelia Simoiu, Imanol Arrieta Ibarra at Stanford University. Michael Wilber, Andreas Veit and Serge Belongie at Cornell Tech. James Davis at University of California, Santa Cruz. Snehalkumar (Neil) Gaikwad at MIT Media Lab. Led a community of over 1,500 students worldwide.

The Aspiring Researchers Challenge, a.k.a. Stanford Crowd Research, is an experiment in massive open online research (MOOR) that explores the possibility of research at scale by connecting an expert with a crowd of aspiring researchers. In a span of six months, we had more than 1,000 sign-ups from people around the world with almost no research experience: more than 90% had never published a paper before, about 25% were female, and more than 70% were undergraduates. We developed a weekly structure that helped coordinate the crowd, distribute credit fairly, and educate and train participants in research topics and process. The structure used peer review to scale the process, which included a series of research phases such as brainstorming, prototyping, development, and user evaluation. The crowd contributed through engineering, design, paper writing, and other core aspects of research.

Through our approach, participants worked on three projects in computer vision, data science, and human-computer interaction (HCI), mentored by professors at Stanford University and UC Santa Cruz. They successfully published one full paper at ACM UIST 2016, one at ACM CSCW 2017, and three work-in-progress papers at top-tier computer science conferences (ACM UIST and AAAI HCOMP). Many students have gone on to undergrad and graduate school at MIT, Stanford, UC Berkeley, Carnegie Mellon, Cornell, and more. We believe that the project is a first step towards realizing research on a wider scale.

Updates and Impact

- Two crowd-authored, full papers got accepted at ACM UIST 2016, Tokyo, Japan and ACM CSCW 2017, Portland, USA.

- Three crowd-authored, work-in-progress papers got accepted at ACM UIST 2015 and AAAI HCOMP 2015.

- Participants have gone on to undergrad and graduate schools like MIT Media Lab, Stanford, UC Berkeley, Cornell, CMU, University of Minnesota, UC San Diego, UC Santa Cruz, Arizona State University, Georgia Tech and more.

- Two crowd participants from Germany and India were offered full-time RA positions at Stanford University.

- One paper under preparation for PNAS, one under review for ACM CHI 2017.

- The HCI project got into the finals of the Knight News Challenge '15 (top 20 of 1,000+).

- The HCI project (Daemo), which aims to build the next-generation crowd marketplace, is about to go live. It may provide earning opportunities to thousands of people and help science through the multiple experiments and tasks that can be hosted on it.

- Daemo crowdsourcing marketplace has been successfully used to create a dataset of 100,000+ question answer pairs on 500+ Wikipedia articles — a paper based on this work recently won the best paper award at EMNLP 2016.

- Global access provided to more than 1,500 people across 6 continents.

Meta publications - about the process

- Vaish, R, Gaikwad, S, Kovacs, G, Veit, A, Krishna, R, Arrieta Ibarra, I, Simoiu, C, Wilber, M, Belongie, S, Goel, S, Davis, J, Bernstein, M. "Crowd Research: Open and Scalable University Laboratories", ACM UIST 2017, Quebec City, Canada. Best Paper Honorable Mention.

- Vaish, R, Davis, J, Bernstein, M. “Crowdsourcing the Research Process”, Collective Intelligence 2015, Santa Clara, CA.

- Vaish, R. “Crowdsourcing the Research Process”, Doctoral Consortium at AAAI HCOMP 2014, Pittsburgh, PA.

Crowd publications - about the projects crowd researchers worked on

- HCI project - poster paper: Stanford Crowd Research Collective., Vaish, R., Bernstein, M. "Prototype Tasks: Improving Crowdsourcing Results through Rapid, Iterative Task Design". AAAI HCOMP 17, Quebec City, Canada.

- HCI project - full paper: Stanford Crowd Research Collective., Vaish, R., Bernstein, M. "Designing a Constitution for a Self-Governing Crowdsourcing Marketplace". Collective Intelligence 17, New York, USA.

- HCI project - demo paper: Stanford Crowd Research Collective., Vaish, R., Bernstein, M. "The Daemo Crowdsourcing Marketplace". ACM CSCW 17, Portland, USA.

- HCI project - full paper: Stanford Crowd Research Collective., Vaish, R., Bernstein, M. "Crowd Guilds: Worker-led Reputation and Feedback on Crowdsourcing Platforms". ACM CSCW 17, Portland, USA. (28 co-authors)

- HCI project - full paper: Stanford Crowd Research Collective., Vaish, R., Bernstein, M. "Boomerang: Aligning Worker and Requester Incentives on Crowdsourcing Platforms". ACM UIST 16, Tokyo, Japan. (38 co-authors)

- HCI project: Stanford Crowd Research Collective, Vaish, R, Bernstein, M. “Daemo: a Self-Governed Crowdsourcing Marketplace”, ACM UIST 15, Charlotte, NC. (61 crowd authors)

- Data Science project: Mysore, A. S., .. Vaish, R,.. et al. “Investigating the ‘Wisdom of Crowds’ at Scale”, ACM UIST 2015, Charlotte, NC. (58 crowd authors)

- Computer Vision project: Veit, A., Wilber, M., Vaish, R., Belongie, S., Davis, J., et al. “On Optimizing Human-Machine Task Assignments”. AAAI HCOMP 2015, San Diego, CA. (49 crowd authors)

- Schuster, C, Zhang, B, Vaish, R, Thomas, J, Gomes, P, Davis, J. “RTI Compression for Mobile Devices”, IEEE ICIMu 2014, Kuala Lumpur, Malaysia (Pilot study)

Talks, Posters, and Press

- [Press] A Stanford-led platform for crowdsourced research gives experience to global participants, Stanford News, 10/23/17

- [Press] Amazon's Turker Crowd Has Had Enough, Wired, 8/23/17

- [Poster] Crowd Research: HCI+Design Open House for CHI 2016, Stanford University, 5/8/16

- [Poster] Daemo: HCI+Design Open House for CHI 2016, Stanford University, 5/8/16

- [Talk] LeadGenius, Berkeley, CA, 3/1/16

- [Talk] Berkeley Institute of Design Seminar, UC Berkeley, 2/9/16

- [Talks] Multiple mentions in talks by Michael Bernstein at Stanford, CMU, UIUC, MIT Media Lab and elsewhere.

- [Talk and Poster] SRC/ISSDM Symposium with Los Alamos National Lab at UCSC, 10/15

- [Press] The Tragedy of the Digital Commons, The Atlantic, 6/8/15

- [Talk] Stanford University HCI lunch, 5/27/15

- [Press] The Aspiring Researcher Challenge: An Experiment In Massive Open Online Research. UCSC SOE, 5/20/15

- [Talk] Stanford Women in Computer Science’s ‘eCSpress yourself’, 5/15

Student Testimonials/Experiences

- "Thank you for taking on this ambitious project and for letting me be a part of it. I feel privileged. I feel like this group is now a part of my family"

- "This initiative was the best thing that i ever came across as a student not everybody get such a good opportunity to work"

- "This is simply amazing work. I am really glad that I can be part of it. I see how hard it is to be all in different place, time zone and yet solve the same problem. I enjoyed seeing the system go from Zero to Hero"

- "Hats off, Rajan. You did a wonderful job managing the whole thing. Coordination was just great. I'm particularly grateful for your (team's) effort cuz I learnt stuff, first hand, that I might not have had the opportunity to at an undergrad level"

- "Thank you to professor Bernstein and Rajan for letting a high schooler join the project. Both of them are very kind and I enjoy working with them"

Stanford Scholar: Collaborative Video Editing Tool for Producing Research Talks at Scale

Collaborators: Sharad Goel, Amin Saberi at Stanford University. Led a community of over 800 students worldwide.

Millions of research papers go unread: some sit behind paywalls, while others are hard for non-researchers to read and understand. Besides writing papers, researchers lack the time and resources to make their work available in other forms or formats. Meanwhile, there's been a shift toward consuming information and knowledge through videos. Through this initiative, we're trying to make the world's research more accessible by mobilizing people worldwide to scale the traditional video creation process and collaboratively create short talks on influential papers that can be improved/edited by anyone. To accomplish this goal, we're designing a scaffolding process to coordinate the crowd, and building a product to enable collaborative and editable video production. Beyond research talks, we're applying our techniques to develop courses. Eventually, this research can help open-ended media collaboration, from education to journalism and beyond.

Updates and Publication

- [Paper] Vaish, R., Goyal, S., Saberi, A., Goel, S. "Creating Crowdsourced Research Talks at Scale", WWW 2018, Lyon, France.

- [Paper] Vaish, R., Goel, S., Saberi, A. "Mobilizing the Crowd To Create an Open Repository of Research Talks", ACM Learning@Scale 2017 (Work-in-Progress), MIT, Cambridge, MA.

- [Talk] Stanford Postdoc Research Symposium, 12/6/2016.

- [Numbers] 1000+ strong community, 115,000+ views, 150+ videos.

- [Progress] 8 research talks completed, based on best papers at WWW 2015 and WWW 2016.

- [Progress] 4 short courses completed, in multiple languages.

- [Talk] Social Computing Lab, Stanford University, 5/23/16.

Testimonials

- "I had always wanted to read through research papers on hot topics of computer science. But I could never get started. This program not only inspires me to read through a paper but requires me to understand it enough, so as to create a talk on it. And learning is always fun when more people are learning with us." - from a student from India.

- "Thanks again for your efforts on our behalf. You and your team are providing a great public service to science." - from a professor from Kellogg School of Management, U. Penn.

- "The talk is extremely thorough despite its brevity. Its certainly better than the talk I gave at WWW." - from a researcher at Wikimedia Research, San Francisco, CA.

- "Thank you so much for the reference and opportunity to join this initiative Rajan. I’ve been taking courses for so long but being able to create my own and help out with education was very special to me." - a high school student who recently got accepted to Stanford's class of 2021.

Twitch Crowdsourcing App: Crowd Contributions in Short Bursts of Time

Collaborators: Michael Bernstein, Keith Wyngarden, Jingshu Chen, Brandon Cheung at Stanford University.

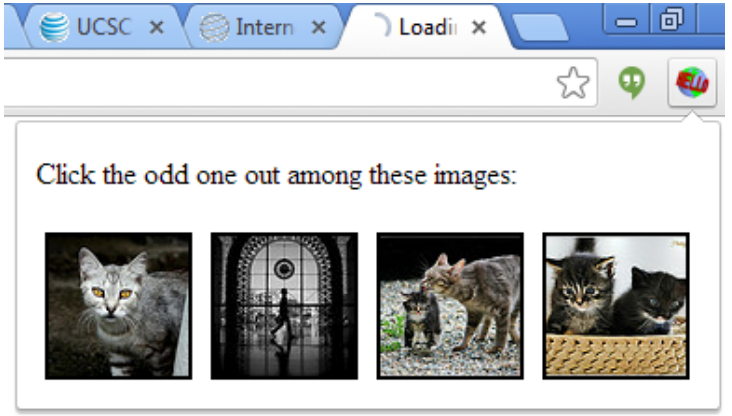

To lower the threshold to participation in crowdsourcing, we present twitch crowdsourcing: crowdsourcing via quick contributions that can be completed in one or two seconds. We introduce Twitch, a mobile phone application that asks users to make a micro-contribution each time they unlock their phone. Twitch takes advantage of the common habit of turning to the mobile phone in spare moments. Twitch crowdsourcing activities span goals such as authoring a census of local human activity, rating stock photos, and extracting structured data from Wikipedia pages. At the time of the CHI’14 paper submission, 82 users had made 11,240 crowdsourcing contributions as they used their phones in the course of everyday life. After six months of deployment, over 100,000 contributions were registered. The median Twitch activity took just 1.6 seconds, incurring no statistically distinguishable costs to unlock speed or cognitive load compared to a standard slide-to-unlock interface.

Publication

- Vaish, R, Wyngarden, K, Cheung, B, Bernstein, M. “Twitch Crowdsourcing: Crowd Contributions in Short Bursts of Time”, CHI’14, Toronto, Canada.

Talks, Posters, Press and Achievements

- [Talk] Stanford University HCI lunch, 6/19/13

- [Talk] MIT CSAIL, 7/16/13

- [Talk and poster] Stanford MobiSocial Retreat, 10/5/13 http://mobisocial.stanford.edu/retreat13/

- [Poster] UC Santa Cruz Research Review Day, 10/17/13

- [Press] What’s new in digital and social media research: Crowdsourcing, analytics, Twitter patterns, product ratings, Harvard’s Journalist’s Resource, 5/14

- [Press] The Next Frontier in Crowdsourcing: Your Smartphone, MIT Technology Review, 3/14

- [Press] Crowdswiping, Stanford The Dish Daily, 2/14

- [Press] Crowdsourcing with a swipe of your finger, Santa Cruz Sentinel/San Jose Mercury, 2/14

- [Press] Crowdsourcing Twitch app could turn swipes into cash, New Scientist, 1/14

- [Achievement] Michael Bernstein was awarded a Google Faculty Grant for the Twitch crowdsourcing proposal.

Microsoft Research, IBM Research, Palo Alto Research Center (PARC), University of California projects

Crowd-Powered Tone Improvement System for Emails

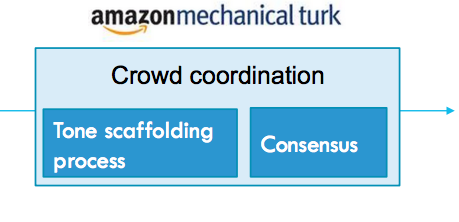

Collaborators: Andrés Monroy-Hernández and Jaime Teevan at Microsoft Research Redmond.

Communicating a message with the right tone is extremely important; a misinterpreted tone can cause confusion or an unexpected reaction and response. However, people often fail to express the right tone in their messages due to a lack of skills or wrong assumptions. Can we help them? There are several automated solutions that detect tone and identify shortcomings, like IBM's Tone Analyzer and ToneCheck. However, their accuracy and reliability are often doubtful. Also, these applications can give recommendations, but cannot fix the tone of the message or text. Our system relies on crowd intelligence to improve the tone of emails. The backend is powered by MTurk, where the crowd follows a systematically designed and scaffolded workflow. The system takes email content and context as input and outputs an improved email. In a study of 29 emails, we found that more than 90% of the emails showed some or significant improvement.

Talk and Publication

- [Talk] Microsoft Research Redmond, 9/15

- [Paper] Vaish, R, Monroy-Hernández, A. "CrowdTone: Crowd-powered tone feedback and improvement system for emails", MSR-TR-2017-1. Also available on arXiv.

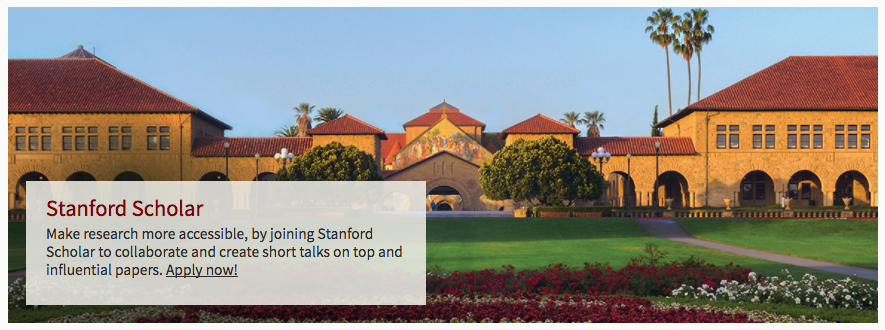

The Whodunit Challenge: Mobilizing the Crowd in India

Collaborators: Bill Thies and Ed Cutrell at Microsoft Research India. Aditya Vashistha at University of Washington.

While there has been a surge of interest in mobilizing the crowd to solve large-scale time-critical challenges, to date such work has focused on high-income countries and Internet-based solutions. In developing countries, approaches for crowd mobilization are often broader and more diverse, utilizing not only the Internet but also face-to-face and mobile communications. The Whodunit Challenge is the first social mobilization contest of its kind to be launched in India. The contest enabled participation via basic mobile phones and required rapid formation of large teams in order to solve a fictional mystery case. The challenge drew 7,700 participants in a single day and was won by a university team in about 5 hours. To understand teams' strategies and experiences, we conducted 84 phone interviews. While the Internet was an important tool for most teams, in contrast to prior challenges we also found heavy reliance on personal networks and offline communication channels.

Publication

- Vashistha, A, Vaish, R, Cutrell, E, Thies, W; “The Whodunit Challenge: Mobilizing the Crowd in India”; INTERACT 2015, Bamberg, Germany. (Equal contributions by Vashistha and Vaish).

Press - sadly, a few links are no longer live

- Microsoft to test social tech in India, Times of India, 2/13

- Microsoft’s social ‘Whodunit’ competition to begin in India, Yahoo! News, 2/13

- From Computing Research to Surprising Inventions (Peter Lee, head of MSR, launching the Whodunit? Challenge at TechFest India), Microsoft Research, 2/13

- Microsoft India Announces A Nationwide Social Gaming Competition, Silicon India, 1/13

- Social whodunnit competition launches in India, New Scientist, 1/13

- More press by Business Standard, CNN IBN, ACM.org, IIIT-Delhi and more...

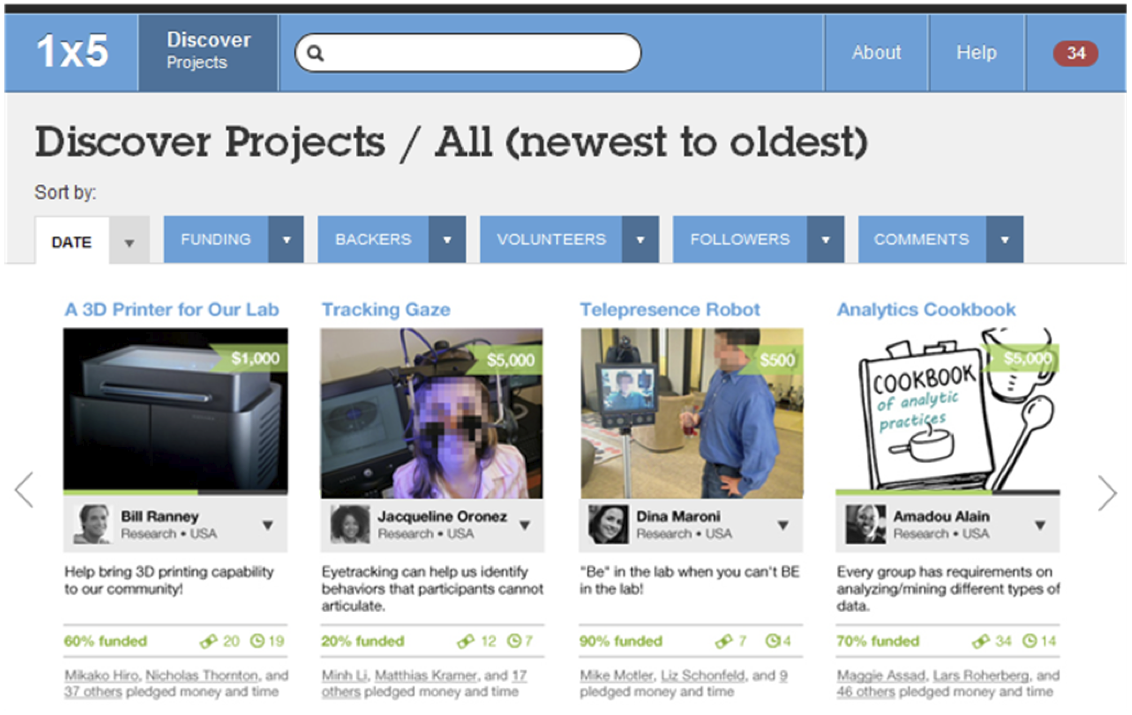

Internet vs. Enterprise Crowdfunding: Contrasting Motivations and Dynamics

Collaborators: Michael Muller, Werner Geyer and Todd Soule at IBM T.J. Watson Research, Cambridge, MA.

In this project we contrast crowdfunding as it occurs on the Internet, with crowdfunding in an Enterprise setting, based on a grounded theory analysis of a crowdfunding trial at IBM Research Almaden. We explore themes of diverse projects, motivations and incentives, strategies and approaches, and collaborations and relationships. Enterprise crowdfunding has its own financial model, social scope, and dynamics, resulting from a heightened sense of collaboration and community. This project helps us learn about the implications for organizations and for future crowdfunding activities.

Publication

- Vaish, R, Muller, M, Geyer, W, Soule, T. “Crowdfunding in the Enterprise and on the Internet: Workplace Users Emphasize Collaboration and Sociality”, Research Report RC25535, IBM Research 2015.

Talk, Poster and Press

- [Talk and Poster] InternFest’13, IBM Research, Cambridge, MA.

- [Press] IBM discovers its inner Kickstarter via enterprise crowdfunding, Network World, 8/13.

- [Press] Can Internal Crowdfunding Help Companies Surface Their Best Ideas?, Harvard Business Review, 9/13.

Peerworthy: Motivating Participation in Prosocial Peer-to-Peer Services

Collaborators: Victoria Bellotti at Palo Alto Research Center (PARC). Vera Liao at University of Illinois Urbana-Champaign.

The Internet is embracing peer-to-peer (P2P) services and the "sharing economy" they create. This project explores how to motivate people to join prosocial P2P services that do not involve monetary rewards. We carried out qualitative studies with users of existing online P2P services and offline peer-production groups to uncover a wide range of motivations. We then conducted a field experiment with a P2P curating platform we created, to compare user recruiting strategies that leverage these motivators. A full paper was accepted at the International Journal of Human-Computer Studies.

Publication

- Vaish, R, Liao, Q. V., Bellotti, V. "What’s in it for me? Self-serving versus other-oriented framing in messages advocating use of prosocial peer-to-peer services", Elsevier International Journal of Human-Computer Studies V109.

Talk and Poster

- [Talk] Palo Alto Research Center, 10/14

- [Poster] Palo Alto Research Center, 7/14

- [Paper] Accepted at the Elsevier International Journal of Human-Computer Studies.

Exploring employment opportunities through microtasks via cybercafés

Collaborators: James Davis at U.C. Santa Cruz. Mrunal Gawade at Centrum Wiskunde & Informatica, Amsterdam.

Microwork in cybercafés is a promising tool for poverty alleviation. For those who cannot afford a computer, cybercafés can serve as a simple payment channel and as a platform to work. However, there are questions about whether workers are interested in working in cybercafés, whether cybercafé owners are willing to host such a setup, and whether workers are skilled enough to earn an acceptable pay rate. We designed experiments in cybercafés in India and Kenya to investigate these issues. We also investigated whether computers make workers more productive than mobile platforms. In surveys, we found that 99% of the users wanted to continue with the experiment in a cybercafé, while 8 of 9 cybercafé owners showed interest in hosting it. User typing speed was adequate to earn a pay rate comparable to their existing wages, and the fastest workers were approximately twice as productive using a computer platform.

Publications

- Gawade, M, Vaish, R, Waihumbu, M. N, Davis, J; “Exploring Employment Opportunities through Microtasks via Cybercafés”; IEEE Global Humanitarian Technology Conference 2012, Seattle, WA.

- Gawade, M, Vaish, R, Waihumbu, M. N, Davis, J; “Exploring microwork opportunities through cybercafes”; ACM DEV 2012, Atlanta, GA. Work-in-progress.

Poster and Achievements

- [Achievement] Semi-finalist at the UC Berkeley Global Social Venture Competition 2012.

- [Poster] UC Berkeley CITRIS Retreat 2013, Berkeley, CA.

Other projects I pursued during my PhD

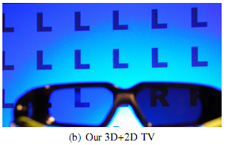

3D+2D TV: 3D Displays with No Ghosting for Viewers Without Glasses

3D displays are increasingly popular in consumer and commercial applications. Many such displays show 3D images to viewers wearing special glasses, while showing an incomprehensible double image to viewers without glasses. We demonstrate a simple method that gives viewers with glasses a 3D experience, while viewers without glasses see a 2D image without artifacts.

3D+2D TV project home site, SIGGRAPH site

Digitization of Health Records in Rural Villages

In collaboration with PAMF, we present a study that reviews current available methods for obtaining electronic health records (EHRs) to facilitate the provision of health services to patients from rural villages in developing countries. The study compares processes of digitizing health records by means of manual transcription, both by hiring a professional transcriptionist and by using online crowdsourcing platforms. Finally, a cost-benefit analysis is conducted to compare the studied transcription methods to an alternate technology-based solution that was developed to support in-the-field direct data entry.

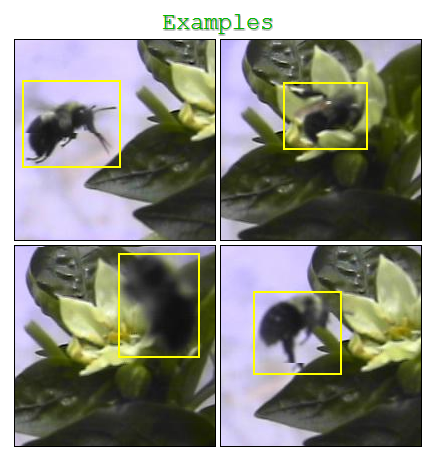

Using crowdsourcing to generate ground truth data for computer vision training

This project was conducted in collaboration with LANL. Computer vision is great, but at times it fails too. To train the algorithms, researchers usually spend several hours annotating images to create ground truth. Why not harness the crowd here? That’s what we’re trying to do: several experiments have been crowdsourced on Mechanical Turk and MobileWorks. The experiments were conducted on two types of data sets: pedestrians and bumblebees.

Low Effort Crowdsourcing: Leveraging Peripheral Attention for Crowd Work - at CrowdCamp at HCOMP'13, with Jeff Bigham and Haoqi Zhang.

Crowdsourcing systems leverage short bursts of focused attention from many contributors to achieve a goal. By requiring people’s full attention, existing crowdsourcing systems fail to leverage people’s cognitive surplus in the many settings in which they may be distracted, performing or waiting to perform another task, or barely paying attention. In this project, we study opportunities for low-effort crowdsourcing that enable people to contribute to problem solving in such settings. We discuss the design space for low-effort crowdsourcing and, through a series of prototypes, demonstrate interaction techniques, mechanisms, and emerging principles for enabling low-effort crowdsourcing.

Before grad school: Older projects and stuff - Class projects, OLPC project, OpenStreetMap project, Yahoo! Open Hack, NASA WorldWind add-on, AOL/Truveo Google Gadgets, Microsoft Imagine Cup etc.

Publications and Patents

- Organisciak, P, Vaish, R. "Accomplishing low-attention microtasks", Productivity Decomposed: Getting Big Things Done with Little Microtasks, ACM CHI Workshop 2016, San Jose, CA.

- Scher, S, Liu, J, Vaish, R, Gunawardane, P, Davis, J. “3D+2DTV: 3D Displays with No Ghosting for Viewers without Glasses”, ACM Transactions on Graphics (TOG) 2012.

- Vaish, R, Ishikawa, S, Liu, J, Berkey, S, Strong, P, Davis, J. “Digitization of Health Records in Rural Villages”, IEEE Global Humanitarian Technology Conference 2013, San Jose, CA.

- Vaish, R, Ishikawa, S, Lundquist, S, Porter, R, Davis, J. “Human Computation for Object Detection”, Tech Report UCSC-SOE-15-03, School of Engineering, University of California Santa Cruz.

- Vaish, R, Organisciak, P, Hara, K, Bigham, J, Zhang, H. “Low-effort Crowdsourcing: leveraging peripheral attention for crowd work”, AAAI HCOMP 2014, Pittsburgh, PA [Work-in-progress and Demo].

- James Davis, Steven Scher, Jing Liu, Rajan Vaish, Prabath Gunawardane, "Simultaneous 2D and 3D Images on a Display”, US Patent 2013032821; 2013.

Press

- 3D+2D TV: A 3D display that’s watchable without glasses, without ghosting, Extreme Tech, 6/13.

- UCSC Researchers Develop Display for Both 3D, 2D Viewing, India West, 10/13

- 3D TV faces uncertain future, MSN, 8/13.

- Researchers Develop Ghost-Free 3D For Viewers Not Wearing Glasses, Gizmodo, 7/13.

- UCSC Researchers Develop 3D Display With No Ghosting for Viewers Without Glasses, ACM Comm., 7/13.

IRL games on Snapchat
